The Ethical Implications of OpenClaw in Future AI (2026)

The year is 2026. Artificial intelligence isn’t just a concept anymore; it’s an everyday force shaping our daily lives. From predictive analytics that streamline logistics to sophisticated algorithms assisting in scientific discovery, AI’s presence is profound. This transformation, however, comes with a deep responsibility. As we stand on the cusp of an AI-driven future, guided by platforms like OpenClaw, understanding the ethical implications isn’t merely academic. It’s essential. This discussion is a companion to The Future of AI with OpenClaw, where we explore the technology’s vast potential. Today, we confront the harder questions.

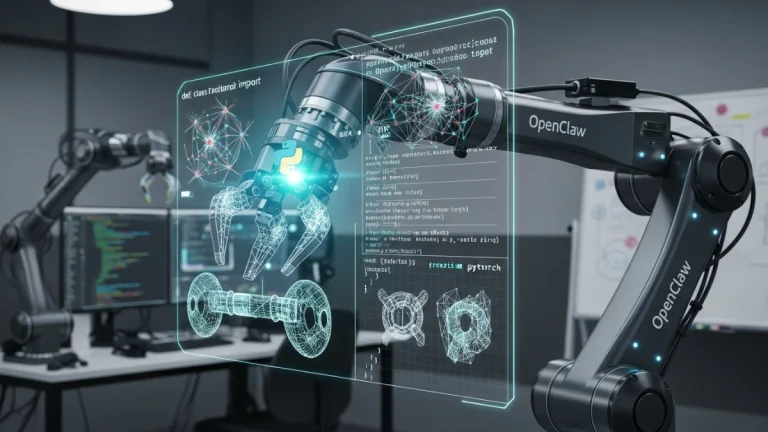

OpenClaw AI is designed around a core philosophy of transparency and collaboration. We are quite literally “opening up” the historically opaque black boxes of advanced AI systems. This isn’t just a marketing slogan. It’s a foundational commitment, one that allows us to build trust and ensure accountability as these intelligent systems become more autonomous and more integrated into society.

Clarity in Complex Systems: OpenClaw’s Ethical Foundation

The very architecture of OpenClaw addresses ethical concerns head-on. Our modular, open-source approach fosters a community-driven development model. This means that components, from machine learning models to data processing pipelines, are subject to scrutiny. Experts, ethicists, and even the general public can inspect, understand, and contribute to the evolution of these systems. This collaborative oversight is critical. It helps catch potential biases or unintended consequences early, before they become deeply embedded problems.

Consider the traditional “black box” problem. Many advanced AI models, especially deep neural networks, make decisions in ways that are incredibly difficult for humans to interpret. This lack of interpretability poses a serious ethical challenge. How can we trust a system if we don’t understand why it made a particular decision? OpenClaw tackles this by prioritizing explainable AI (XAI) techniques. We don’t just want AI that performs well; we want AI that can articulate its reasoning, at least to a meaningful extent. This allows for rigorous auditing and ongoing refinement. It also provides a clear path for establishing responsibility when outcomes are unexpected or undesirable.
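To make the idea of explainability concrete, here is a minimal sketch of one widely used, model-agnostic technique: permutation importance. It shuffles one input feature at a time and measures how much the model’s accuracy drops; a large drop suggests the model relies on that feature. This is an illustration only, not OpenClaw’s API: `toy_model`, the sample rows, and the function names are all assumptions made for the example.

```python
import random


def accuracy(model, rows, labels):
    """Fraction of rows for which the model's prediction matches the label."""
    return sum(model(r) == y for r, y in zip(rows, labels)) / len(labels)


def permutation_importance(model, rows, labels, n_features, seed=0):
    """Return the accuracy drop caused by shuffling each feature column."""
    rng = random.Random(seed)
    baseline = accuracy(model, rows, labels)
    importances = []
    for j in range(n_features):
        column = [r[j] for r in rows]
        rng.shuffle(column)  # break the link between feature j and the label
        shuffled = [r[:j] + (v,) + r[j + 1:] for r, v in zip(rows, column)]
        importances.append(baseline - accuracy(model, shuffled, labels))
    return importances


def toy_model(row):
    """Hypothetical classifier that looks only at feature 0."""
    return int(row[0] > 0.5)


rows = [(0.1, 0.9), (0.8, 0.2), (0.3, 0.7), (0.9, 0.4), (0.2, 0.1), (0.7, 0.6)]
labels = [toy_model(r) for r in rows]
scores = permutation_importance(toy_model, rows, labels, n_features=2)
print(scores)
```

Because `toy_model` ignores feature 1, shuffling that column cannot change its predictions, so its importance is zero. An auditor reading such scores can check whether a model leans on features it should not be using.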

Navigating the Ethical Minefield of Future AI

The rapid advancement of AI presents several ethical “minefields” we must navigate with care. OpenClaw is engineered to help us traverse these challenging terrains, but vigilance remains paramount.

Algorithmic Bias: A Persistent Challenge

One of the most talked-about ethical issues is algorithmic bias. AI models learn from data. If that data reflects historical societal biases, the AI can perpetuate and even amplify them. Think about AI used in hiring, loan applications, or even medical diagnoses. Biased algorithms can lead to discriminatory outcomes, and that is not acceptable. OpenClaw confronts this by promoting diverse, representative datasets and offering tools for bias detection and mitigation. We’re developing techniques that not only identify statistical disparities, such as differing approval rates across demographic groups, but also allow developers to adjust model parameters to achieve fairer outcomes while still maintaining high performance. It’s about designing fairness into the system from the start, a proactive rather than reactive approach.
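As a rough illustration of what “identifying statistical disparities” can mean in practice, the sketch below computes two common group-fairness measures from a model’s predictions: the demographic parity difference and the disparate impact ratio. The function names and the sample data are assumptions for this example; they are not part of any OpenClaw toolkit.

```python
from collections import defaultdict


def selection_rates(predictions, groups):
    """Share of positive (1) predictions within each group."""
    totals = defaultdict(int)
    positives = defaultdict(int)
    for pred, group in zip(predictions, groups):
        totals[group] += 1
        positives[group] += int(pred == 1)
    return {g: positives[g] / totals[g] for g in totals}


def demographic_parity_difference(predictions, groups):
    """Gap between the highest and lowest group selection rates (0 is parity)."""
    rates = selection_rates(predictions, groups)
    return max(rates.values()) - min(rates.values())


def disparate_impact_ratio(predictions, groups):
    """Lowest selection rate divided by the highest (1.0 is parity)."""
    rates = selection_rates(predictions, groups)
    highest = max(rates.values())
    return min(rates.values()) / highest if highest > 0 else 1.0


# Hypothetical loan decisions: 1 = approved, 0 = denied.
predictions = [1, 1, 1, 0, 1, 0, 0, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

gap = demographic_parity_difference(predictions, groups)
ratio = disparate_impact_ratio(predictions, groups)
print(gap, ratio)
```

Here group A is approved 75% of the time and group B 25% of the time, so the gap is 0.5 and the ratio is about 0.33. A commonly cited heuristic, the “four-fifths rule,” treats a ratio below 0.8 as a signal worth investigating. Metrics like these flag a disparity; deciding whether it is unjustified still takes human judgment.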

Accountability and Responsibility in Autonomous Systems

As AI systems become more sophisticated and autonomous, determining accountability when things go wrong becomes complex. If an AI-driven medical system makes a diagnostic error, or an autonomous vehicle causes an accident, who is responsible? The developer? The operator? The AI itself? OpenClaw’s transparent nature helps here. Its auditable trails mean we can trace an AI’s decision-making process back to its origins. This clarity isn’t just about assigning blame; it’s about understanding why an error occurred so we can prevent it from happening again. We believe human oversight is crucial for high-stakes decisions, ensuring a human remains firmly “in the loop,” especially as AI systems take on more complex tasks. This balance of autonomy and human supervision forms a critical ethical boundary.
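One way an auditable trail could be built is as a hash-chained decision log, in which each entry includes the hash of the previous one so that later tampering is detectable. The sketch below is a minimal illustration under that assumption. The `AuditTrail` class, its field names, and the sample records are hypothetical and do not describe OpenClaw’s actual logging format.

```python
import hashlib
import json

GENESIS_HASH = "0" * 64  # placeholder "previous hash" for the first entry


def _digest(body):
    """Stable SHA-256 hash of an entry body."""
    payload = json.dumps(body, sort_keys=True).encode("utf-8")
    return hashlib.sha256(payload).hexdigest()


class AuditTrail:
    """Append-only log of decisions, with each entry chained to the last."""

    def __init__(self):
        self.entries = []

    def record(self, model_version, inputs, decision, reviewer=None):
        prev_hash = self.entries[-1]["hash"] if self.entries else GENESIS_HASH
        body = {
            "model_version": model_version,
            "inputs": inputs,
            "decision": decision,
            "reviewer": reviewer,  # the human in the loop, if any
            "prev_hash": prev_hash,
        }
        entry = dict(body, hash=_digest(body))
        self.entries.append(entry)
        return entry

    def verify(self):
        """Return True only if no entry was altered, removed, or reordered."""
        prev_hash = GENESIS_HASH
        for entry in self.entries:
            body = {k: v for k, v in entry.items() if k != "hash"}
            if body["prev_hash"] != prev_hash or _digest(body) != entry["hash"]:
                return False
            prev_hash = entry["hash"]
        return True


trail = AuditTrail()
trail.record("diagnosis-model-1.2", {"scan_id": "s-001"}, "refer", reviewer="dr_lee")
trail.record("diagnosis-model-1.2", {"scan_id": "s-002"}, "clear")
print(trail.verify())
```

Recording the model version and the reviewing human alongside each decision is what lets an investigation trace an outcome back to its origins rather than merely confirming that it happened.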

Privacy and Data Security

AI thrives on data, but this appetite for information raises serious privacy concerns. Individuals have a right to control their personal data. How do we ensure AI systems respect privacy while still being effective? OpenClaw integrates advanced privacy-preserving technologies. These include differential privacy, which adds calibrated statistical noise to query results or training updates so that the output reveals very little about any single individual, and federated learning, where models learn from data distributed across many devices without the raw data ever leaving those devices. These methods allow AI to learn from vast amounts of information while sharply limiting what can be inferred about any one person. Protecting sensitive information is not just a technical requirement; it’s an ethical imperative.
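The two techniques named above can be sketched in a few lines each. The example below shows the Laplace mechanism for a differentially private count and the weighted averaging step at the heart of federated averaging. It is a simplified illustration, not production-grade privacy code and not OpenClaw’s implementation; the function names, the `epsilon` value, and the sample data are assumptions.

```python
import random


def laplace_noise(scale, rng):
    """Sample Laplace(0, scale) as the difference of two exponential draws."""
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def private_count(records, predicate, epsilon, rng=None):
    """Noisy count of matching records.

    Adding or removing one person changes a count by at most 1
    (sensitivity 1), so the Laplace noise scale is 1 / epsilon.
    """
    if rng is None:
        rng = random.Random()
    true_count = sum(1 for r in records if predicate(r))
    return true_count + laplace_noise(1.0 / epsilon, rng)


def federated_average(client_updates):
    """Combine client model weights, weighted by each client's sample count.

    client_updates is a list of (weights, n_samples) pairs. Only weights
    are shared with the server; the raw data stays on the devices.
    """
    total = sum(n for _, n in client_updates)
    dim = len(client_updates[0][0])
    return [sum(w[i] * n for w, n in client_updates) / total for i in range(dim)]


# Hypothetical usage.
ages = [23, 35, 41, 52, 67, 29, 38]
noisy = private_count(ages, lambda a: a >= 40, epsilon=1.0, rng=random.Random(42))
global_weights = federated_average([([1.0, 2.0], 1), ([3.0, 4.0], 3)])
print(noisy, global_weights)
```

A smaller `epsilon` means more noise and stronger privacy at the cost of accuracy, which is the trade-off practitioners have to tune. In real deployments federated learning is often combined with secure aggregation or differential privacy, because model updates alone can still leak information.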

OpenClaw: A Responsible “Claw-Hold” on Progress

Our commitment to ethical AI isn’t just theoretical. It’s built into every layer of the OpenClaw platform. We believe that true progress means progress made responsibly. We’re developing and supporting tools that help practitioners adhere to ethical guidelines, and we openly encourage dialogue about the hardest problems. For example, our frameworks simplify the implementation of explainability modules and bias detection algorithms for developers. This means ethical considerations aren’t an afterthought; they’re an integral part of the development cycle.

The open nature of OpenClaw also means that the community can develop specific ethical compliance tools. Imagine a “safety claw” that prevents an AI from operating outside predefined ethical parameters. This collaborative ethos greatly accelerates our ability to respond to new ethical challenges. The more minds we have scrutinizing these systems, the better equipped we are to ensure they act in humanity’s best interest. It helps us get a firm claw-hold on what responsible AI truly means.
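To give the “safety claw” idea some shape, the sketch below wraps a decision function in a guard that checks every proposed action against a set of named rules and refuses to return anything that breaks one. Everything here is hypothetical: `make_safety_claw`, `PolicyViolation`, the toy loan model, and the rules are invented for illustration and do not correspond to an existing OpenClaw component.

```python
class PolicyViolation(Exception):
    """Raised when a proposed action breaks a predefined rule."""


def make_safety_claw(action_fn, rules):
    """Wrap action_fn so its output is checked against every rule.

    rules maps a rule name to a function (request, proposed) -> bool
    that returns True when the proposed action is allowed.
    """
    def guarded(request):
        proposed = action_fn(request)
        for name, is_allowed in rules.items():
            if not is_allowed(request, proposed):
                raise PolicyViolation(name)
        return proposed

    return guarded


def toy_loan_model(request):
    """Hypothetical model: offers a loan of five times monthly income."""
    return {"approved": True, "amount": request["monthly_income"] * 5}


rules = {
    "amount_within_limit": lambda req, out: out["amount"] <= 20000,
    "high_value_needs_reviewer": lambda req, out: (
        out["amount"] < 10000 or req.get("reviewer") is not None
    ),
}

guarded_model = make_safety_claw(toy_loan_model, rules)
print(guarded_model({"monthly_income": 1500}))
```

A request that would produce an out-of-bounds offer raises `PolicyViolation` with the name of the broken rule, which gives operators a clear reason for the refusal. Keeping the rules as plain, named functions is a deliberate choice in this sketch: it makes them easy for a community to read, debate, and extend.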

Broader Societal Implications

Beyond the immediate technical considerations, AI also presents broader societal ethical implications. We must consider the impact of advanced automation on employment. While OpenClaw empowers incredible advancements, we also recognize the need for proactive strategies to support workforces through this transition. Education and reskilling programs become vital. Indeed, OpenClaw itself is playing a part here, as described in OpenClaw and the Future of Education and Personalized Learning, by helping to equip individuals with the skills needed for tomorrow’s economy. Closing the digital divide, so that access to these powerful AI tools is equitable, is another pressing concern. We advocate for policies and initiatives that ensure the benefits of AI are broadly shared, not just concentrated among a privileged few. Ethical AI isn’t just about preventing harm; it’s about promoting societal good.

The Path Forward: A Shared Responsibility

The journey into future AI is a shared one. OpenClaw provides the foundational framework, the open infrastructure, and the ethical tools to guide us. But the responsibility for building a truly ethical AI future rests with all of us: developers, researchers, policymakers, and citizens alike. We must engage in continuous dialogue, adapt our ethical frameworks as technology evolves, and remain vigilant. We cannot simply sit back and let AI develop unchecked.

The potential of AI to solve humanity’s greatest challenges, from climate change (explored in OpenClaw’s Role in Sustainable Development and Green Tech) to disease, is immense. But this potential can only be fully realized if we build these systems with a deep understanding of their ethical dimensions. At OpenClaw AI, we are optimistic. We believe that by embracing transparency, fostering collaboration, and prioritizing human values, we can develop AI that not only advances technology but also upholds our collective moral compass. The future of AI is bright, provided we illuminate it with ethical principles.

For more insights into the societal impact of AI and the importance of ethical design, consider resources from organizations like the IEEE Global Initiative on Ethics of Autonomous and Intelligent Systems, or the Stanford Encyclopedia of Philosophy’s entry on the ethics of artificial intelligence. These discussions are crucial for shaping our shared future.